How Does Search Engine Indexing Work?

Before indexing a website, the search engine crawls it to discover its links and content. Search engine indexing is the process by which a search engine organises and stores that crawled content in its index, the large database it consults when answering search queries.

Search engines first crawl the online content and then categorise it. The crawlers are bots that follow links, scan websites and gather data. That data is delivered to the search engine's servers to be indexed.
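The link-following behaviour described above can be sketched as a breadth-first traversal. This is only an illustration, not how any particular search engine is implemented: the `PAGES` dictionary is a hypothetical in-memory stand-in for the web (a real crawler would fetch pages over HTTP), and `LinkCollector` and `crawl` are names made up for this example.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "web": URL -> HTML. A real crawler would fetch over HTTP.
PAGES = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/blog/post-1">Post</a>',
    "https://example.com/blog/post-1": "<p>No links here.</p>",
}


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, pages):
    """Breadth-first crawl; returns URLs in the order they were visited."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue:
        url = queue.popleft()
        html = pages.get(url)
        if html is None:  # page does not exist in our toy web
            continue
        visited.append(url)
        parser = LinkCollector()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited
```

Calling `crawl("https://example.com/", PAGES)` visits the home page first, then the pages it links to, and finally the blog post that is only reachable through the blog page. The `seen` set is what stops the crawler from looping between pages that link to each other.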

When you publish or update content, the search engine crawls and indexes it the next time its crawlers visit, which is not necessarily straight away. Crawlers gather data such as keywords, publication dates, images and video files. Search engines analyse the relationships between different pages and websites by following internal links and external URLs.

Search engine bots crawl your website within a crawl budget. The crawl budget determines how many of your pages a bot will crawl in a given time period, which in turn limits how quickly new or updated pages can be indexed.

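Crawlers do not necessarily crawl every page on a website. They follow ordinary links (informally called ‘dofollow’ links) and generally skip links marked ‘nofollow’, although Google now treats ‘nofollow’ as a hint rather than a strict rule. Links from high-quality external websites pass authority, often called ‘link juice’, to your pages, and crawlers can reach your website by following them.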
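As a rough illustration of how a crawler might tell the two kinds of link apart, the sketch below reads the `rel` attribute of each `<a>` tag. The class and function names are hypothetical, and treating ‘nofollow’ as a hard skip is a simplifying assumption made for this example.

```python
from html.parser import HTMLParser


class RelAwareLinkParser(HTMLParser):
    """Sorts links into followed and nofollow lists based on rel tokens."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollow = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        # rel can hold several space-separated tokens, e.g. "nofollow sponsored"
        rel_tokens = (attr.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollow.append(href)
        else:
            self.followed.append(href)


def split_links(html):
    """Return (followed, nofollow) lists of hrefs found in the HTML."""
    parser = RelAwareLinkParser()
    parser.feed(html)
    return parser.followed, parser.nofollow
```

For example, `split_links('<a href="/a">A</a><a rel="nofollow" href="/b">B</a>')` puts `/a` in the first list and `/b` in the second.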

Some content cannot be crawled by search engines, such as pages behind login forms or password protection and text embedded within images.

Tools for indexing

These tools can be used to tell Google how to crawl and index your website:

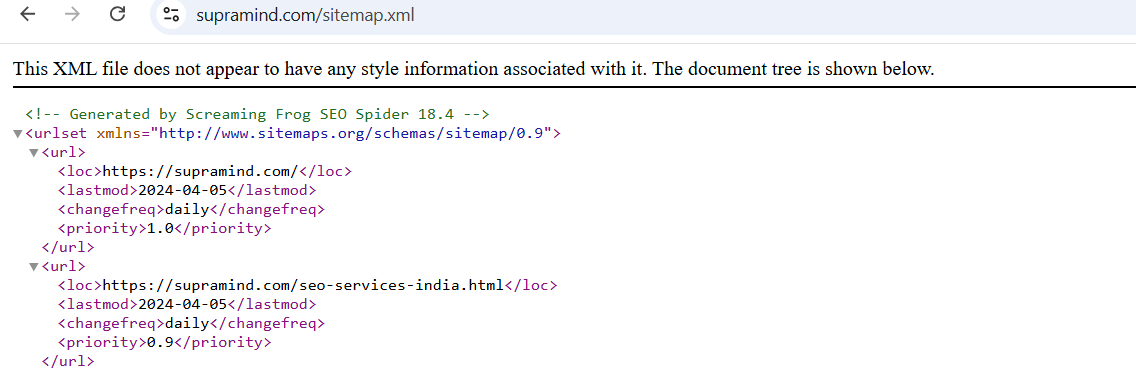

Sitemaps

There are two types of sitemaps: HTML and XML. The HTML sitemap is a page for visitors that lists the content on your website and is usually linked from the website’s footer. The XML sitemap contains a list of all the essential pages of your website. It is submitted to the search engine to help it discover, crawl and index your content.
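A minimal sketch of generating an XML sitemap with Python's standard library is shown below. The namespace is the one defined by the sitemaps.org protocol; the `build_sitemap` function and the example URLs are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, lastmod) pairs.

    lastmod is a 'YYYY-MM-DD' string, or None to omit the element.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    # tostring returns bytes when given a byte encoding; decode for convenience
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True).decode("utf-8")


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/", "2024-01-15"),
        ("https://example.com/about", None),
    ]))
```

The resulting text would typically be saved as `sitemap.xml` in the website's root directory before being submitted to a search engine.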

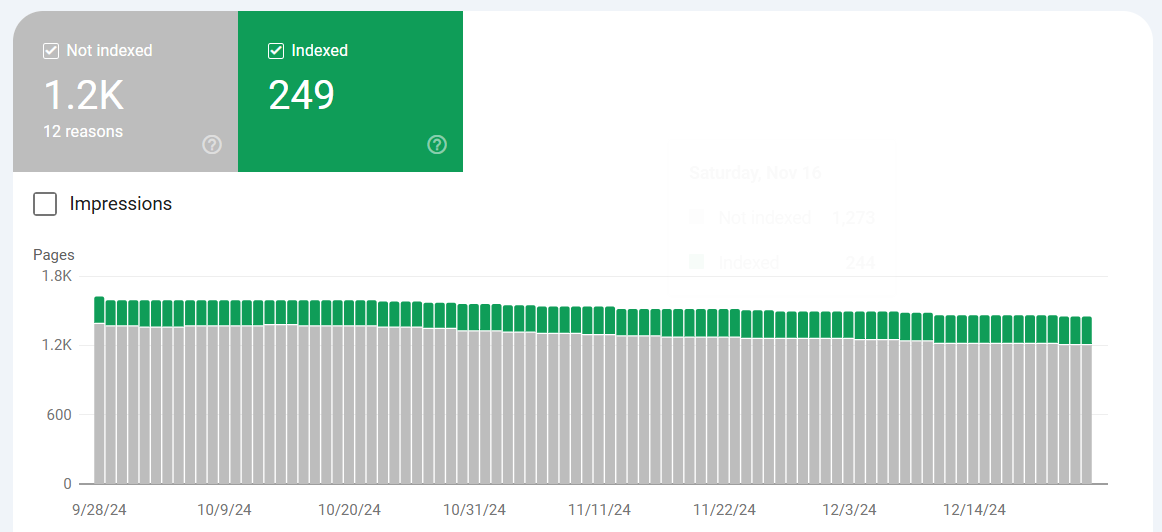

Google Search Console

You can look at the ‘Index coverage’ report (labelled ‘Page indexing’ in current versions of Search Console) to see which of your pages have been indexed by Google. The report also highlights any issues. You can submit your XML sitemap to Google Search Console as well. The sitemap acts as a roadmap and helps Google to index your content more effectively.

Alternative search engine consoles

Besides Google, there are other search engines such as Bing, Yandex and Yahoo. Limiting yourself to a single search engine cuts you off from traffic from alternative sources. Each search engine has its own tools for monitoring your website’s indexing.

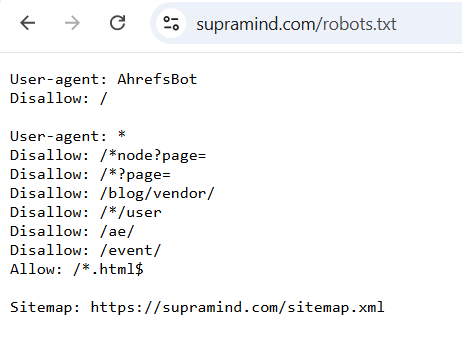

Robots.txt

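An XML sitemap tells search engines which pages on your website you want crawled and indexed. The robots.txt file does the opposite: it tells crawlers which content to stay away from. The file holds crawling rules for your website and is stored in its root directory. You can use the ‘Disallow’ directive to block crawlers from particular pages or directories. Note that ‘Disallow’ prevents crawling rather than indexing: a blocked URL can still appear in search results if other websites link to it, so pages that must stay out of the index need a ‘noindex’ directive instead.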
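To see how a well-behaved crawler might interpret these rules, the sketch below feeds a small robots.txt to Python's built-in `urllib.robotparser`. The file contents and URLs are hypothetical examples. Keep in mind that the rules govern what a crawler may fetch, not what ends up in the index.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, for illustration only
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart

Sitemap: https://example.com/sitemap.xml
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser without any network access."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)

# A URL under a disallowed path may not be fetched
print(rules.can_fetch("Googlebot", "https://example.com/admin/settings"))
# Any other URL is allowed
print(rules.can_fetch("Googlebot", "https://example.com/blog/post-1"))
# Sitemap locations declared in the file (site_maps() needs Python 3.8+)
print(rules.site_maps())
```

In practice a crawler would load the live file with `set_url()` and `read()` rather than parsing a string, then call `can_fetch()` before requesting each page.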

How to get your website indexed better?

To get your website indexed better, create an XML sitemap and submit it to multiple search engines. You should also make your website mobile-friendly and optimise it for speed, which makes crawling and indexing faster.

| Stage | Process | Percentage of Importance | Average Time Frame | Common Tools/Techniques |
| --- | --- | --- | --- | --- |
| 1. Crawling | Discovering web pages through links or sitemaps. | 40% | Continuous (minutes to weeks) | Web crawlers (e.g., Googlebot, Bingbot). |
| 2. Parsing | Analyzing the HTML content of a page. | 10% | Seconds to minutes | HTML parsers, metadata extraction tools. |
| 3. Storing Data | Saving crawled data in the search index. | 20% | Milliseconds to seconds | Databases, file systems (e.g., Bigtable). |
| 4. Indexing | Organizing data by keywords, metadata, etc. | 25% | Milliseconds to seconds | Inverted indexing, keyword ranking systems. |
| 5. Ranking (Preparation) | Prioritizing pages based on relevance and quality. | 5% | Continuous | Algorithms (e.g., PageRank, semantic analysis). |
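The inverted indexing mentioned in the table can be illustrated with a toy example. An inverted index maps each word to the set of pages that contain it, so a query can be answered by looking up words instead of scanning every page. This is a deliberately simplified sketch: the function names and sample pages are made up, and real search indexes also store positions, frequencies and ranking signals.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_inverted_index(documents):
    """Map each word to the set of document IDs that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index


def search(index, query):
    """Return IDs of documents containing every word in the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    # Copy the first posting set so the index itself is never modified
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())
    return result


# Hypothetical crawled pages: URL path -> extracted text
DOCS = {
    "/a": "Search engines crawl pages",
    "/b": "Crawl budget limits pages",
    "/c": "Sitemaps help search engines",
}
INDEX = build_inverted_index(DOCS)
```

With this data, `search(INDEX, "crawl pages")` matches `/a` and `/b`, because both words appear in each of those pages, while a query for a word that appears nowhere returns an empty set.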

To optimise your website for better performance you can hire SEO services.
